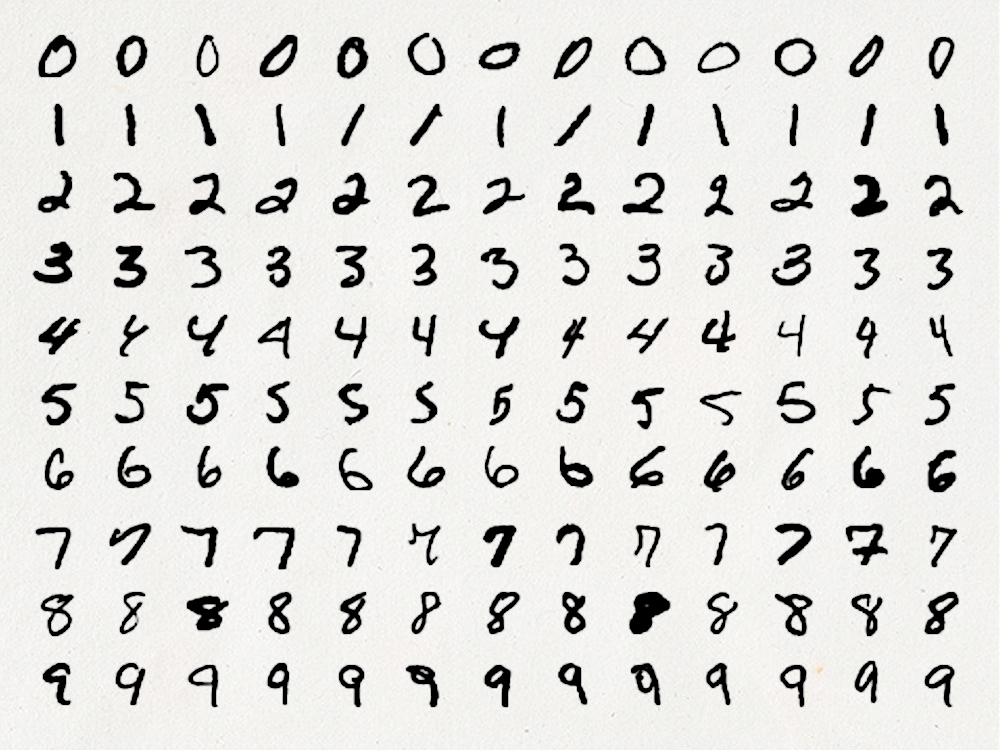

Is it Time to Ditch the MNIST Dataset?

The MNIST dataset is the bread and butter of deep learning. Featuring 70,000 handwritten digits split into a training set (60,000 images) and a test set (10,000 images), it is the go-to choice for a large share of introductory tutorials, benchmarking tests, and data science showcases. This post questions whether the dataset is suitable for these uses, arguing that the challenge it presents is too simple when tackled with modern machine learning tools. We also look at alternative datasets that offer a more appropriate challenge without fundamentally changing the learning problem.

Efficiently Removing Zero-Variance Columns (An Introduction to Benchmarking)

Many machine learning algorithms, such as principal component analysis on standardized variables, refuse to run when faced with columns of data that have zero variance. There are multiple ways to remove these columns in R, some much faster than others. In this post, I introduce several of these methods and demonstrate how to use the `rbenchmark` package to evaluate their performance.
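As a rough illustration of the kind of comparison described above, here is a minimal sketch of three ways to drop zero-variance columns from a data frame, followed by an `rbenchmark::benchmark()` call that times them. The function names (`drop_zero_var_apply`, `drop_zero_var_vapply`, `drop_zero_var_unique`) and the toy data are hypothetical and are not necessarily the methods covered in the post. The two variance-based versions assume every column is numeric and free of `NA`s; the benchmark only runs if `rbenchmark` is installed.

```r
# Hypothetical example data: column `b` is constant, so its variance is zero.
df <- data.frame(
  a = c(1, 2, 3, 4),
  b = c(5, 5, 5, 5),
  c = c(2, 4, 6, 9)
)

# Approach 1: apply() coerces the data frame to a matrix, then computes the
# variance of each column. Assumes all columns are numeric with no NAs.
drop_zero_var_apply <- function(x) {
  x[, apply(x, 2, var) != 0, drop = FALSE]
}

# Approach 2: vapply() works column by column on the data frame itself,
# avoiding the matrix coercion. Same assumptions as approach 1.
drop_zero_var_vapply <- function(x) {
  x[, vapply(x, var, numeric(1)) != 0, drop = FALSE]
}

# Approach 3: keep columns with more than one distinct value. This also
# handles non-numeric columns and avoids comparing a floating-point
# variance with zero.
drop_zero_var_unique <- function(x) {
  keep <- vapply(x, function(col) length(unique(col)) > 1, logical(1))
  x[, keep, drop = FALSE]
}

print(drop_zero_var_unique(df))

# Time the three approaches with rbenchmark, if the package is available.
# Named arguments become the test labels in the results table.
if (requireNamespace("rbenchmark", quietly = TRUE)) {
  print(rbenchmark::benchmark(
    apply  = drop_zero_var_apply(df),
    vapply = drop_zero_var_vapply(df),
    unique = drop_zero_var_unique(df),
    replications = 1000,
    columns = c("test", "replications", "elapsed", "relative")
  ))
}
```

On a data frame this small, the timings mostly measure function-call overhead; differences between the approaches are more likely to be meaningful on data of realistic size.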